6 ways to increase energy efficiency in data centers

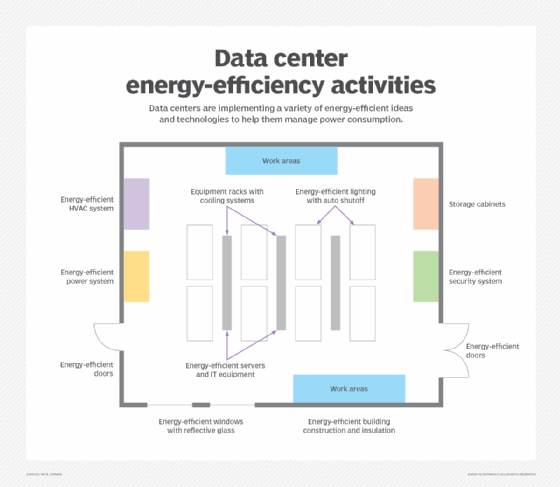

Although there is no single trick that admins can use to achieve an energy-efficient data center, several small changes can significantly decrease energy use.

Infrastructure power requirements can drive up operating costs. Data center managers can cut their utility bills if they address power needs for CPUs, storage and cooling systems.

Utility bills are no small data center expense. As part of the push to address IT spending, data center managers and organizations continue to look for ways to drive down data center energy costs and increase overall energy efficiency.

Because so many infrastructure components use electricity, managers have several options for increasing data center energy efficiency. These options include adjusting fan speeds, changing storage hardware, using cloud infrastructure and even raising the operating temperature. These small changes can collectively reduce data center power consumption, resulting in significant energy savings.

Despite the huge potential for efficiency gains, admins should spell out the risks and get executive support before pursuing any of the following tactics to reduce data center power consumption. Without proper planning, high-density, highly efficient infrastructure can overheat a data center in seconds.

1. Switch to variable-speed fans

One way to decrease energy usage in the data center is to switch to variable-speed fans. Recent research has found that reducing CPU fan speed can cut power consumption by 20%. As such, organizations should use variable-speed fans to cool data center equipment. These fans run only as fast as their thermostatic sensors require, so they slow down when CPU utilization is low and draw less power as a result.

Don't stop with servers; check the cooling features of uninterruptible power supply devices and the power supplies of various appliances on the same power grid, plus any other hotspots that might have a fan spinning.
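To illustrate why slowing a fan saves a disproportionate amount of power, the sketch below applies the fan affinity law, under which fan power scales roughly with the cube of fan speed. This is an idealized engineering rule of thumb rather than a figure from the research cited above, and the 12 W rated draw is a hypothetical value chosen only for illustration.

```python
def fan_power_fraction(speed_fraction: float) -> float:
    """Approximate share of full-speed power drawn at a given speed.

    Assumes the idealized fan affinity law (power ~ speed cubed).
    Real fans and motor drives deviate from this curve.
    """
    if not 0.0 <= speed_fraction <= 1.0:
        raise ValueError("speed_fraction must be between 0 and 1")
    return speed_fraction ** 3


def fan_power_watts(rated_watts: float, speed_fraction: float) -> float:
    """Estimated draw in watts for a fan with the given full-speed rating."""
    return rated_watts * fan_power_fraction(speed_fraction)


if __name__ == "__main__":
    rated = 12.0  # hypothetical full-speed draw of one server fan, in watts
    for pct in (100, 90, 80, 70, 50):
        watts = fan_power_watts(rated, pct / 100)
        print(f"{pct:>3}% speed -> {watts:5.2f} W")
```

Under this assumption, a fan running at 80% speed draws only about half of its full-speed power, which is why thermostatic speed control can pay off even when fans rarely stop completely.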

2. Use liquid cooling

Another way to reduce power consumption -- particularly for high-performance hardware -- is to adopt liquid cooling for CPUs. Instead of fans that blow air across a heat sink, liquid cooling works similarly to a car's radiator, using liquid to dissipate heat.

Liquid cooling is widely regarded as being more effective than air-based cooling methods, and, depending on the application, it might have the additional benefit of reduced noise. Although the pumps used for liquid cooling consume some power, liquid cooling units help CPUs run cooler, which can help reduce the energy required to cool the data center.

3. Raise the temperature

Another way to achieve an energy-efficient data center is to reevaluate the optimal data center temperature. In the past, data centers always had to be kept cool in order for computing hardware to function correctly. More recently, equipment vendors have been designing systems that can operate at higher temperatures. According to data center infrastructure suppliers, modern servers can perform well up to 77 degrees Fahrenheit. Even so, some data centers operate servers closer to 65 degrees Fahrenheit.

If admins raise the ambient temperature a few degrees, there can be an immediate drop in power usage from the cooling system without any effects on server performance. There's no overhead or investment needed, although close temperature and server monitoring -- as well as a pilot program -- are advisable in order to avoid unpleasant surprises.

Admins shouldn't haphazardly raise data center temperatures. Guidelines from ASHRAE provide recommended operating standards for energy consumption, temperature and humidity control.
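A pilot program of this kind could be driven by a simple rule: raise the setpoint one small step at a time, and only while the hottest server inlet stays comfortably under a chosen limit. The following is a minimal sketch of that idea, not a vendor or ASHRAE procedure. The `InletReading` type, the 1-degree step and the 2-degree safety margin are all hypothetical; the 77 degrees Fahrenheit default simply reuses the figure quoted above.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class InletReading:
    """One server-inlet temperature sample (hypothetical structure)."""
    rack: str
    temp_f: float


def fahrenheit_to_celsius(temp_f: float) -> float:
    return (temp_f - 32.0) * 5.0 / 9.0


def next_setpoint(
    current_f: float,
    readings: Sequence[InletReading],
    step_f: float = 1.0,     # assumed size of each pilot step
    limit_f: float = 77.0,   # upper limit taken from the article's figure
    margin_f: float = 2.0,   # assumed safety margin below the limit
) -> float:
    """Suggest the next cooling setpoint for a cautious pilot."""
    if not readings:
        raise ValueError("at least one inlet reading is required")
    hottest = max(r.temp_f for r in readings)
    if hottest > limit_f:
        return current_f - step_f   # over the limit: roll back one step
    if hottest + step_f + margin_f <= limit_f:
        return current_f + step_f   # enough headroom: raise one step
    return current_f                # near the limit: hold steady


if __name__ == "__main__":
    sample = [InletReading("A1", 70.0), InletReading("A2", 72.0)]
    print(next_setpoint(68.0, sample))
```

In practice, the readings would come from whatever monitoring system the data center already uses, and each step would be held long enough to observe server behavior before moving on.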

4. Use bigger, slower drives

Using bigger, slower drives can help, but this approach doesn't suit high-demand transactional workloads such as financial databases or critical 24-hour systems. If admins move rarely accessed files to a lower storage tier, they can replace faster units with larger, low-energy-demand drives. Fewer drives, in turn, use less energy and create less heat. This can be an expensive undertaking, but as most organizations build out more storage every quarter, it can be a worthwhile investment.

Organizations should also use the power management profiles of the OS to put hard drives into standby mode when they aren't actively in use. This reduces power consumption and can prolong the hard drive's lifespan, provided the idle timeout is long enough to avoid frequent spin-up cycles.
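One way to find candidates for a lower storage tier is to scan a file tree for files that have been neither read nor modified for a long time. The sketch below is one possible approach, not a prescribed method. The function name and the 180-day threshold are hypothetical, and access times are only meaningful on file systems that record them (volumes mounted with options such as `noatime` will not).

```python
import os
import time
from pathlib import Path
from typing import List, Optional, Tuple

SECONDS_PER_DAY = 86_400


def find_cold_files(
    root: str,
    min_idle_days: int = 180,      # assumed threshold for "mostly unused"
    now: Optional[float] = None,   # injectable clock, useful for testing
) -> List[Tuple[Path, int]]:
    """Return (path, size_in_bytes) for files idle longer than the threshold."""
    current = time.time() if now is None else now
    cutoff = current - min_idle_days * SECONDS_PER_DAY
    cold: List[Tuple[Path, int]] = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            try:
                st = path.stat()
            except OSError:
                continue  # skip files that vanish or cannot be read
            # Treat the later of last access and last modification as "last used".
            last_used = max(st.st_atime, st.st_mtime)
            if last_used < cutoff:
                cold.append((path, st.st_size))
    return cold
```

Summing the sizes returned by `find_cold_files` for a given share gives a rough estimate of how much capacity could move to slower, lower-power drives.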

5. Switch to SSDs

Organizations should also consider replacing hard disks with SSDs where it's practical. SSDs generally consume far less power than hard disks and deliver a greater number of IOPS.

For example, Samsung's enterprise SSDs consume only 1.25 W of power in active mode and 0.3 W when idle. The active figure is roughly one-fifth of the power consumed by a 15,000 rpm SAS hard disk drive, which consumes about 6 W of power per drive. Plus, SSDs don't have any moving parts, which means they produce significantly less heat than hard disks.
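To put those wattages in annual terms, the following sketch multiplies the per-drive figures quoted above (6 W for the hard disk, 1.25 W for the SSD in active mode) across a drive fleet. It assumes, as a simplification, that every drive is active around the clock, and it ignores the extra cooling load that each watt of drive power adds. The 100-drive fleet is a hypothetical example.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours


def annual_kwh(watts_per_drive: float, drive_count: int,
               hours: int = HOURS_PER_YEAR) -> float:
    """Energy used per year by a group of identical drives, in kWh."""
    return watts_per_drive * drive_count * hours / 1000.0


def annual_savings_kwh(drive_count: int,
                       hdd_watts: float = 6.0,    # figure quoted in the article
                       ssd_watts: float = 1.25    # figure quoted in the article
                       ) -> float:
    """kWh saved per year by replacing each hard disk with an SSD."""
    return (annual_kwh(hdd_watts, drive_count)
            - annual_kwh(ssd_watts, drive_count))


if __name__ == "__main__":
    drives = 100  # hypothetical fleet size
    print(f"HDD fleet: {annual_kwh(6.0, drives):.0f} kWh/year")
    print(f"SSD fleet: {annual_kwh(1.25, drives):.0f} kWh/year")
    print(f"Savings:   {annual_savings_kwh(drives):.0f} kWh/year")
```

For 100 drives, this works out to 5,256 kWh per year for the hard disks against 1,095 kWh for the SSDs, a difference of 4,161 kWh before any cooling savings are counted.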

6. Use cloud-based services

Moving IT workloads to a cloud or colocation provider shifts the power consumption to the host site rather than eliminating it, but many organizations concede that big vendors are experts at squeezing the most out of each kilowatt. Hosted service providers often focus on getting the best value from the power they use, at a lower cost for their customers.