Kadmy - Fotolia

Develop a 7-step server maintenance checklist

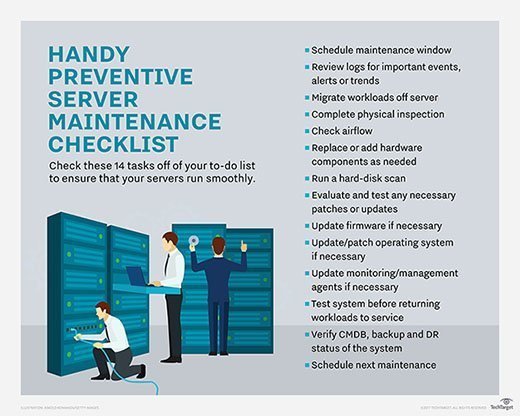

For effective server maintenance, admins must perform proactive hardware and software checks. Any list must include dusting, log review and software patch tests.

Even with the performance and redundant features of servers, increased workload consolidation and reliability expectations can take a toll on server hardware.

A server maintenance checklist should cover physical elements as well as the system's software layer configuration. It must also account for the fact that thorough upkeep takes time, person hours and testing. Using a checklist helps admins define their goals and keep IT teams on track.

1. Develop a maintenance routine

Server administrators too often overlook planning maintenance windows. Don't wait until there is an actual failure; set aside time for routine server preventative maintenance.

Maintenance frequency depends on the age of the equipment, the data center and the volume of servers requiring maintenance. For example, older equipment located in an equipment closet needs more frequent inspections than new servers deployed in a high-efficiency particulate air-filtered, well-cooled data center.

Organizations can base routine maintenance schedules on vendor or third-party provider routines; if the vendor's service contract calls for system inspections every four or six months, follow that schedule.

2. Prepare for downtime

Have a plan before you tackle the items on a server maintenance checklist. This includes checking the system logs for any errors or events that require more direct attention. If system logs denote errors with a specific memory module, you should order a replacement dual in-line memory module (DIMM) and have it available for installation. Similarly, if there are firmware, OS or agent patches/updates available, test and vet those first before the planned maintenance window.

Have a clear, set plan for taking the system offline and returning it to service. Before virtualization, the server and its resident application would require downtime to accommodate the maintenance window -- forcing admins to perform maintenance at night or on weekends.

DOWNLOAD A PDF OF THIS SERVER MAINTENANCE CHECKLIST

Virtualized servers enable workload migration instead of downtime, so admins can migrate applications to other servers and they'll remain available whenever server maintenance occurs on the underlying host system. Before service, know where the virtual machines should go, migrate VMs to selected systems and verify each workload is functional before taking the server down for maintenance.

At this point, admins can shut down the server and remove it from the rack.

3. Inspect airflow paths

Once a server is offline, visually inspect its external and internal airflow paths. Remove any dust accumulation and debris that can impede cooling air.

Start with the exterior air inlets and outlets, then proceed into the system chassis, looking at the CPU heat sink and fan assemblies, memory modules and all cooling fan blades and air duct pathways. Be sure to clean the server with it removed from the rack. Remove dust or debris on an appropriate, static-safe workspace with clean, dry compressed air.

Dust-busting isn't a new process, but it's still necessary. Dust is a thermal insulator, making it all the more important to remove it, now that alternative cooling schemes and ASHRAE recommendations have raised data center operating temperatures. Dust and other airflow obstructions cause the server to use more energy and can even prompt avoidable component failures.

4. Check local hard disks

Servers rely on internal hard disks for booting, workload startup and storage, and user data. Disk media problems hurt workload performance and stability and lead to premature disk failures. Use tools such as the Check Disk utility to verify the disk's integrity and attempt to recover any bad sectors on it.

Magnetic media isn't perfect; common problems include bad sectors and fragmentation. RAID goes a long way toward preserving data integrity in the wake of storage errors, but smaller, 1U rack servers don't provide enough physical space to deploy an array of disks.

Disk fragmentation simply won't go away, as long as the NT file system and file allocation table, file systems use disk space by first-available clusters. Fragmentation can slow down a server's disk and cause failures. The Optimize-Volume utility Windows Server 2016 defragments, trims and conducts storage tier processing.

5. Verify log data and events

Servers record a wealth of incident information in event logs. No server maintenance checklist is complete without a careful review of system, malware and other event logs. Sure, critical system issues should attract the attention of admins and technicians right away, but countless minor issues can signal chronic problems.

While examining logs, admins should check the reporting setup and verify the correct alert and alarm recipients. For example, if a technician leaves the server group, they'll need to update the server's reporting system.

Double-check the contact methods, too; a critical error reported to a technician's company email address is moot if the error occurs outside of business hours.

When log inspection reveals chronic or recurring issues, proactive investigation can resolve the problem before it escalates. If the server's log reports recoverable errors in a memory module, it will not trigger critical alarms. But if there are repeated instances signaling problems with the module, admins can perform more a more detailed analysis to identify impending failures.

If the problems are not severe enough to shut down a server, admins can return the server to production until replacement hardware comes in.

6. Test patches and updates

The server's software stack -- BIOS, OS, hypervisors, drivers and applications -- must all work together. Unfortunately, software code is rarely problem-free, so pieces of this puzzle are frequently patched or updated to fix bugs, improve security, streamline interoperability and enhance performance.

No production software should have automatic updates. Administrators should determine if a patch or upgrade is necessary, then thoroughly evaluate and test the change.

Software developers cannot possibly test every potential combination of hardware and software, so choose patches and updates wisely to avoid performance issues or workflow interruptions. For example, a monitoring-agent patch could cause problems with an important workload because the new agent takes more bandwidth than expected.

The shift to DevOps, with smaller and more frequent updates, increases the potential for problems. Organizations still must test any patch or update in a lab before deploying it in a sandbox or test setup and always have the ability to restore the original software configuration.

7. Record any system changes

A lot can happen to a server during a maintenance window such as hardware, software or system configuration changes. When admins have completed the server maintenance checklist, it's vital for them to double-check and record any new system state. For example, changing a network adapter, adding or replacing DIMMs or updating an OS alters the system's configuration.

Organizations that depend on system configuration management tools may need to update or discover any changes -- recording those changes to the configuration management database before the system is allowed back into service. Admins must update any enforced or desired state configuration posture to allow the changes.

Also verify system security postures such as firewall settings, antimalware versions or scanning frequency and intrusion detection settings. Security checks ensure that system software changes did not inadvertently expose any attack surfaces were closed in the prior configuration.

Don't forget to update any system backups or disaster recovery (DR) content once the server is back online.

Verify that the server's backup/DR frequency remains unchanged, unless any related settings specifically must be adjusted to reflect the server's new use case.