Traditional vs. converged vs. hyper-converged infrastructure setups

HCI clusters, converged infrastructure and rack servers all offer benefits for a data center. Still, figuring out which is easier to set up requires evaluation and planning.

Your specific requirements should drive the preferred architecture for your on-premises virtualization platform. Sometimes the virtualization platform must be unique to the business, and, other times, a more generic platform is enough.

If the virtualization platform requires customization for your business, you probably want to choose every component. When the specifics of the virtualization platform aren't business differentiators, you will probably be best served with a predefined selection of components.

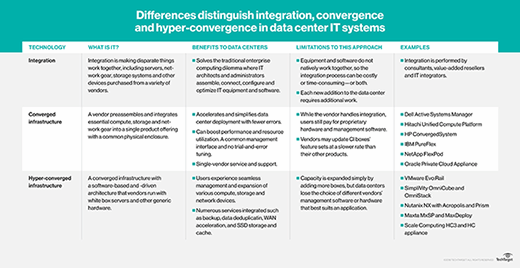

Whether it's a traditional server build, a converged infrastructure rack or some hyper-converged infrastructure nodes, there are options to suit your data center virtualization needs. Here, we'll compare traditional vs. converged vs. hyper-converged infrastructure options.

Traditional server architecture

With traditional infrastructure, you select the best option from each category of component and possibly purchase each category independently of the others. Categories include servers, storage arrays and networks, but decisions about individual adapter cards and even cables are also required.

Often, a different category is purchased each financial year to spread the replacement cost for the complete infrastructure over three to five years. One year a new set of servers is purchased and installed, another year a new storage array and yet another year a new data center network. With this model, customers are responsible for selecting the right combination of components and ensuring that all the parts work together.

Traditional infrastructure gives the most customer freedom, along with the most work and potential issues that must be handled by the customer. One customer I worked with was upgrading the version of the hypervisor in an existing environment; the updated network card drivers caused incompatibility between the existing network adapters and the existing Network File System. Resolving the issue was the customer's responsibility, as they chose each part and the upgrade to a new hypervisor version.

Converged infrastructure

Converged infrastructure (CI) is a purchasing and maintenance model where a collection of components is purchased as a complete system from one vendor. Every server, network adapter and cable is included down to the last power cable.

The parts are the same parts that might be bought as traditional infrastructure, but now the vendor validates that all the hardware and software components will work correctly together. The CI product is sold as a single item with all the components. Usually, the only choice is the size of the infrastructure, offered in fixed small, medium and large configurations.

Upgrades to deployed CI are also the vendor's responsibility; the vendor validates the specific versions of drivers, firmware and hypervisor that work together. Converged infrastructure usually requires ongoing vendor involvement to keep the deployed infrastructure operational.

One CI customer was a large international manufacturer; they had the same medium-sized CI at each manufacturing plant and a larger size at each of the main corporate offices. Having the vendor deliver consistent services around the world enabled the company to keep a leaner IT team. However, every change to the CI had to be completed by the vendor and was a chargeable activity.

Hyper-converged infrastructure

Hyper-converged infrastructure (HCI) has the objective of making infrastructure as invisible as possible. The usual HCI architecture is to use local storage in the physical servers to form a scale-out storage cluster for virtual machines; the same physical servers run a hypervisor to form a scale-out compute cluster for the same VMs. Some platforms enable compute-only or storage-only servers that contribute to only one cluster.
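The way the same servers contribute to both clusters can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Node` class, the node names, the core counts, the capacities and the replica count of two are all hypothetical and do not describe any particular HCI product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    """One physical server in a hypothetical HCI cluster."""
    name: str
    cpu_cores: int         # 0 for a storage-only node
    raw_storage_tb: float  # 0.0 for a compute-only node


def cluster_totals(nodes, replica_count=2):
    """Return (total CPU cores, usable storage in TB).

    Assumption: every block is stored replica_count times, so usable
    capacity is the raw capacity divided by replica_count.
    """
    cores = sum(node.cpu_cores for node in nodes)
    raw_tb = sum(node.raw_storage_tb for node in nodes)
    return cores, raw_tb / replica_count


# Three standard nodes contribute to both the compute and storage clusters.
nodes = [Node(f"hci-{i}", cpu_cores=32, raw_storage_tb=20.0) for i in range(1, 4)]
print(cluster_totals(nodes))  # (96, 30.0)

# A compute-only node grows the compute cluster but not the storage cluster.
nodes.append(Node("compute-only-1", cpu_cores=64, raw_storage_tb=0.0))
print(cluster_totals(nodes))  # (160, 30.0)
```

Adding a standard node raises both totals at once, which is the scale-out behavior described above; a compute-only or storage-only node raises just one of them.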

Most HCI products provide servers and storage; customers must still provide their own networking equipment. HCI products also simplify and automate update processes, usually enabling customers to manage their own updates with a simple web interface. Customers who love HCI often enjoy not spending time managing their virtualization infrastructure, preferring to focus on their VMs and applications.

Traditional vs. converged vs. hyper-converged

Traditional infrastructure provides the greatest flexibility and requires the most work to design, deploy and operate. A customized set of requirements will lead to needing a customized traditional infrastructure.

Converged infrastructure provides a choice of fixed configurations and places the complexity on the CI vendor. A large-scale requirement for infrastructure with maintenance outsourced will suit a CI system.

Hyper-converged infrastructure provides a nearly invisible infrastructure; most of the complexity is hidden inside the HCI platform. A requirement for general-purpose infrastructure with minimal ongoing maintenance will suit HCI.

Advantages of hyper-converged infrastructure

- Simple deployment. Most HCI platforms can go from cardboard boxes arriving at your loading dock to working virtualization infrastructure in a morning.

- Simple operations. Operational tasks are focused on VMs rather than infrastructure. Performance and data protection are often simply policies that are applied to the VM.

- Simple growth. HCI grows with a scale-out model where new physical servers are added to increase the capacity of the HCI cluster. Most HCI combines storage capacity and performance with servers, so storage capacity and performance increase when VM compute capacity increases.

- Low cost of entry. Most HCI platforms only require three physical servers to get started, a far lower price than even the smallest converged infrastructure deployment.

Disadvantages of hyper-converged infrastructure

- Simple options. Many HCI servers have limited capacity for expansion cards, so needing GPUs for VDI or machine learning might limit your HCI options.

- Small servers. HCI platforms usually use dual-socket servers. If you have unusually large-scale requirements, you might want larger servers.

- Virtualization only. Often the HCI storage is only available within the HCI platform. If you have requirements for shared storage attached to physical servers, then you might still need a storage array outside of HCI.

- Limited scaling. HCI platforms replicate storage between servers for high availability. This replication can be limited by network bandwidth between servers, leading to lower-than-expected storage performance with large clusters. Many HCI platforms have best-practice maximum cluster sizes that are smaller than the maximum cluster sizes supported by the hypervisor.

- Budget cycles. HCI is usually cost-effective when you buy new HCI nodes as your workload grows, rather than buying three years' worth of capacity upfront. Most enterprise organizations have budget cycles that expect fixed spending each year and might not adapt well to HCI's growth patterns.
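The budget-cycle point is easier to see with a little arithmetic. The sketch below compares buying three years of capacity upfront with buying nodes as the workload grows. The forecast of 3, 4 and 6 nodes is a made-up example, and the function names are hypothetical; real node counts and prices will differ.

```python
def purchase_plans(nodes_needed_per_year):
    """Return (upfront, incremental) lists of nodes purchased each year.

    upfront: buy the peak requirement in year one, nothing afterward.
    incremental: each year, buy only the nodes needed beyond those owned.
    """
    peak = max(nodes_needed_per_year)
    upfront = [peak] + [0] * (len(nodes_needed_per_year) - 1)

    incremental = []
    owned = 0
    for needed in nodes_needed_per_year:
        buy = max(0, needed - owned)
        incremental.append(buy)
        owned += buy
    return upfront, incremental


def idle_node_years(purchases, nodes_needed_per_year):
    """Sum, over all years, the nodes owned but not yet needed."""
    owned = 0
    idle = 0
    for buy, needed in zip(purchases, nodes_needed_per_year):
        owned += buy
        idle += owned - needed
    return idle


forecast = [3, 4, 6]  # hypothetical nodes needed in years 1, 2 and 3
upfront, incremental = purchase_plans(forecast)
print(upfront, idle_node_years(upfront, forecast))          # [6, 0, 0] 5
print(incremental, idle_node_years(incremental, forecast))  # [3, 1, 2] 0
```

In this example the upfront plan pays for five node-years of capacity that sit idle, while the incremental plan has none. The trade-off is that the incremental plan spends a different amount each year, which is what a fixed annual budget struggles to accommodate.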

Choosing the best server for your business

As you are choosing a virtualization platform, think about whether you have special requirements and how much you want to manage the platform. Start by evaluating HCI options that require the least of your attention over time. If you can't satisfy your requirements with HCI, then think about whether converged infrastructure suits how you operate your infrastructure.

If you must go with a traditional infrastructure, look for vendor reference architectures to be the basis for your infrastructure and modify the design to suit your requirements. Choosing every component for yourself should be a last resort for when your needs are unusual or where the design of the virtualization platform is your unique business value.
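The evaluation order described in this section can be written down as a short decision function. This is a minimal sketch: the function name and its two yes/no inputs are hypothetical simplifications, and answering each question still takes the evaluation and planning discussed above.

```python
def recommend_platform(hci_meets_requirements, ci_fits_operations):
    """Apply the evaluation order from this article.

    hci_meets_requirements: True if an HCI product satisfies your needs.
    ci_fits_operations: True if a vendor-managed CI system suits how you
        operate your infrastructure.
    """
    # Start with the option that needs the least attention over time.
    if hci_meets_requirements:
        return "hyper-converged infrastructure"
    # Next, consider a vendor-validated, vendor-maintained system.
    if ci_fits_operations:
        return "converged infrastructure"
    # Last resort: build your own, starting from a reference architecture.
    return "traditional infrastructure based on a vendor reference architecture"


print(recommend_platform(True, False))   # hyper-converged infrastructure
print(recommend_platform(False, True))   # converged infrastructure
print(recommend_platform(False, False))
```

The order of the checks is the point: HCI is considered first even when CI would also fit, and traditional infrastructure is reached only when neither of the other two applies.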