Want an HPC data center? Colocation could be the answer

Colocation can offer the space, HVAC and security an organization needs to implement high-performance computing and delve deeper into its data. But not every provider is a match.

Once limited to scientific research, high-performance computing is a more viable option for many enterprise applications as organizations turn to HPC for deeper data insights. But an HPC data center doesn't come cheap. An organization must not only acquire and manage the hardware, but also have the facilities to house it.

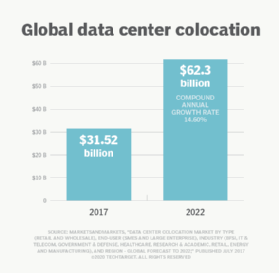

To rein in facility costs, some organizations are turning to colocation data centers that provide leasable space for housing IT equipment, which can lower costs and reduce operational overhead.

The promising world of HPC

An HPC system can deliver more than a teraflop of performance, or 1 trillion floating-point operations per second (FLOPS), the type of arithmetic typical of scientific calculations. This level of processing power makes it possible to run complex compute operations quickly, efficiently and reliably. An HPC system can -- and often does -- consist of multiple server nodes networked into a single cluster that supports parallel processing.
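To make the teraflop threshold concrete, a cluster's theoretical peak is commonly estimated as nodes × cores per node × clock rate × floating-point operations per core per cycle. The following is a minimal sketch of that estimate. The function name and the hardware figures are hypothetical and chosen only for illustration, and real workloads typically sustain well below the theoretical peak.

```python
def peak_flops(nodes: int, cores_per_node: int,
               clock_hz: float, flops_per_cycle: int) -> float:
    """Estimate theoretical peak floating-point operations per second."""
    return nodes * cores_per_node * clock_hz * flops_per_cycle


# Hypothetical small cluster: 4 nodes, 32 cores each, 2.5 GHz,
# 16 floating-point operations per core per cycle (assumed value).
total = peak_flops(nodes=4, cores_per_node=32,
                   clock_hz=2.5e9, flops_per_cycle=16)

TERAFLOP = 1e12  # 1 trillion FLOPS
print(f"Peak: {total / TERAFLOP:.2f} teraflops")  # Peak: 5.12 teraflops
print("Exceeds 1 teraflop:", total > TERAFLOP)
```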

An HPC cluster is better suited to organizations that can't afford the supercomputer price tag, enabling more organizations to enter the HPC arena. Even so, the number of cluster nodes can still run into the thousands, depending on the applications and amounts of data, which translates to a lot of data center space.

The processing power inherent in an HPC system makes it suitable for a variety of industries and institutions, including governments, financial services, natural resources, manufacturing, and media and entertainment. Engineers, for example, can use HPC to verify structural designs, test prototypes or perform simulations.

To carry out these operations, organizations need HPC systems made up of interconnected compute, storage and network resources that can perform trillions of calculations per second. The components must operate as a single integrated unit, even if they number in the thousands.

Imagine how much space it takes to house an HPC data center cluster with several thousand nodes. For the few organizations that have the space and infrastructure in their own data centers, the effects may be minimal. Their hardware capacity and IT teams would still be affected, but not to the same degree as at organizations that have none of these resources. For many of the latter, the lack of physical infrastructure could make HPC impossible -- if not for colocation.

Colocation to the HPC rescue

In addition to the square footage, a colocation facility typically provides power, cooling, bandwidth and physical security. Customers can lease space by the rack, cabinet, cage or room, depending on the data center.

Many colocation data centers can now accommodate HPC systems, support denser rack spaces and offer more power per rack, up to 50 kW in some cases. They also provide ventilation and cooling systems to support the high-density equipment, along with security and power backups. With such facilities, organizations that have traditionally shied away from HPC now have more options for high-performing system implementation.
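Power density per rack directly affects how much floor space a cluster needs. The sketch below estimates rack counts from a power budget alone. The node count and per-node wattage are hypothetical, the 10 kW comparison figure is an assumed lower-density rack, and only the 50 kW limit comes from the text above. The estimate also ignores physical rack units, cooling limits and network gear.

```python
import math


def racks_needed(node_count: int, watts_per_node: float,
                 rack_limit_kw: float) -> int:
    """Estimate racks required when power, not space, is the constraint."""
    nodes_per_rack = math.floor(rack_limit_kw * 1000 / watts_per_node)
    if nodes_per_rack < 1:
        raise ValueError("a single node exceeds the rack power limit")
    return math.ceil(node_count / nodes_per_rack)


# Hypothetical cluster: 2,000 nodes drawing 800 W each.
high_density = racks_needed(2000, 800, rack_limit_kw=50)  # 50 kW per rack
low_density = racks_needed(2000, 800, rack_limit_kw=10)   # assumed 10 kW rack

print(f"50 kW racks: {high_density}")  # 50 kW racks: 33
print(f"10 kW racks: {low_density}")   # 10 kW racks: 167
```

Under these assumptions, the higher-density facility houses the same cluster in roughly one-fifth the racks.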

In a traditional colocation model, the provider rents out the space, and customers bring in, own and operate their own equipment. The provider ensures the facility remains secure and operational but typically leaves the hardware alone. Some facilities might offer optional management services, but usually for an extra fee on top of the cost of leasing the space.

Colocation facilities can help lower the operational expenses that come with HPC data center operations, which require a secure, climate-controlled space in a facility that can operate all day, every day, without interruption.

In addition to the space itself, a colocation facility provides the operational infrastructure, as well as trained personnel to handle cable installation, power supply maintenance and HVAC system upkeep.

A colocation data center houses equipment in a facility specifically designed for reliability, efficiency and security, which are paramount to HPC system hosting. Most organizations would have a tough time achieving the same quality environment on their own.

Colocation can also simplify the process of IT resource distribution across geographical regions when trying to accommodate data and user workflows. This setup makes it easier to scale systems as needed, without putting an undue burden on internal IT resources.

Vetting HPC colocation providers

A number of providers, such as Advania, Colovore, Hydro66, ScaleMatrix and Verne Global, now offer HPC-friendly data centers. However, not all facilities are the same, and IT teams should consider several important factors as they vet HPC colocation providers.

A top consideration is location. Because colocation customers often manage their own systems, they need to be able to physically access the equipment as well as connect to it remotely. If the IT team requires regular physical entry into the HPC data center, the cost savings that come with a more remote location might pale in comparison to the cost of all the extra travel.

Distance also plays a role in performance, depending on where the data originates and the nature of the applications. The facility should be in an area that best meets application and workflow requirements. At the same time, an organization should contemplate whether it is worth the risks of using a colocation facility in an area prone to disaster.

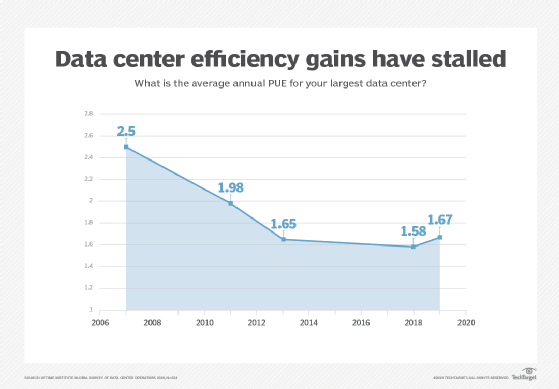

Managers should confirm the facility can provide the necessary power and cooling densities to meet the demands of an HPC system and evaluate the facility's power usage effectiveness (PUE) rating. Whether the data center runs HPC systems or more traditional workloads, the lower a PUE rating is, the better it is in terms of costs and environmental effects.

There should be backup systems in place to protect systems in the event of power failures. These might include backup generators as well as redundant equipment such as utility feeds, transfer switches, power distribution units or uninterruptible power supply appliances. At a minimum, backup systems should provide enough power for HPC systems to shut down safely, which protects components from damage and data from loss or corruption.

Terms to know when vetting HPC colocation providers

Power usage effectiveness. PUE measures data center energy efficiency. It is calculated by dividing the total amount of power entering a data center by the power used to run the computing infrastructure within it. A PUE of 1.0 would mean every watt goes to the IT equipment, so the lower a PUE rating is, the better it is in terms of costs and environmental impact.

Power distribution unit. A PDU distributes electrical power to the equipment in a data center, typically at the rack level.

Uninterruptible power supply. A UPS device enables a system to run for at least a short period of time when the primary power source is lost. It can also provide protection from power surges.
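The PUE definition above reduces to a single division. As a minimal sketch, the function below computes it from two power readings. The function name and the sample kilowatt figures are hypothetical and used only to illustrate the ratio.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power usage effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    if total_facility_kw < it_equipment_kw:
        raise ValueError("total facility power cannot be less than IT power")
    return total_facility_kw / it_equipment_kw


# Hypothetical facilities, each running 1,000 kW of IT equipment.
facility_a = pue(total_facility_kw=1500, it_equipment_kw=1000)
facility_b = pue(total_facility_kw=1100, it_equipment_kw=1000)

print(f"Facility A PUE: {facility_a:.2f}")  # Facility A PUE: 1.50
print(f"Facility B PUE: {facility_b:.2f}")  # Facility B PUE: 1.10
```

In this example, Facility B spends 100 kW on cooling, lighting and other overhead for the same IT load that costs Facility A 500 kW, which is why the lower rating is preferable.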

Above all, organizations should assess what safeguards the colocation facility has in place to ensure the HPC system is fully protected. For example, what steps does the provider take to prevent unauthorized individuals from accessing customer systems or the building as a whole? Other factors to evaluate include the ability to scale systems, whether the facility is network carrier-neutral and what data backup systems are in place. Finally, check for other factors that could impact or streamline HPC data center operations, such as Wi-Fi access, technical staging areas, on-site parking, meeting spaces or the availability of loading docks.

Before signing with a vendor, carefully review the service-level agreement (SLA), especially as it applies to the guaranteed percentage of uptime and what steps will be taken if there's a problem. The SLA should detail security protections so the organization and IT teams understand exactly how their systems will be safeguarded. Review the terms of recourse so you know your rights if the provider does not meet the terms of the SLA.
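A guaranteed uptime percentage is easier to evaluate once it is converted into the downtime it permits. The sketch below does that conversion for a 365-day year. The uptime tiers shown are common illustrative values, not figures from any particular provider's SLA.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def allowed_downtime_hours(uptime_percent: float) -> float:
    """Hours of downtime per year permitted by a given uptime guarantee."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime must be between 0 and 100 percent")
    return HOURS_PER_YEAR * (1 - uptime_percent / 100)


# Illustrative uptime tiers (assumed, not taken from a specific SLA).
for tier in (99.9, 99.99, 99.999):
    hours = allowed_downtime_hours(tier)
    print(f"{tier}% uptime allows about {hours * 60:.1f} minutes "
          f"({hours:.2f} hours) of downtime per year")
```

The gap between tiers is large: 99.9% permits close to nine hours of downtime per year, while 99.999% permits only a few minutes.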

HPC + colocation = reliable deployment

An HPC system can make it possible for an organization to derive important insights from its data, regardless of the type of industry or use cases. But the HPC equipment must be housed in a data center that is highly secure and reliable, which can be an expensive proposition.

For many organizations, the only viable option is to implement their HPC systems in colocation data centers. Even if an organization has its own facilities, the space might not be suited to high-density HPC equipment or there might not be room to add more equipment.

When HPC systems are housed in a qualified colocation data center, an organization gets more than just floor space. It obtains a facility that has been designed to provide the most secure and reliable HPC data center possible.
