Denser is better: Increased storage and server density

For storage and servers, being dense is good. Careful planning can maximize the benefits of greater computing densities while minimizing potential risks.

As next-generation data centers continue to shrink, IT professionals must look ahead to reap the benefits of greater server density and storage density while mitigating the associated risks and problems.

Modern data centers are profoundly affected by computing densities. The ongoing evolution of computing hardware, system form factors, networking tactics, virtualization and supporting technologies has allowed far more enterprise computing in just a fraction of the physical space.

Increased densities allow for smaller capital purchases and lower energy bills. But there are downsides to increased computing densities, namely potential management challenges and greater system hardware dependence.

Ever-denser hardware

The impact of increasing density is most obvious in the deployment of computing hardware, such as servers, networking and storage devices.

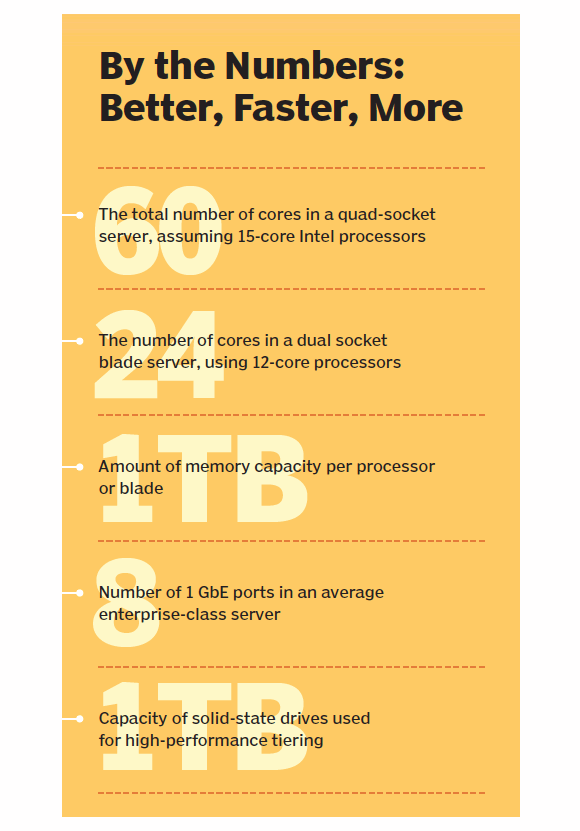

Consider the advances in traditional processor density: Single-, dual- and quad-core processors have given way to models sporting eight or more cores. For example, Intel's Xeon E7-8857 provides 12 cores, while the Xeon E7-8880L provides 15. This gives a quad-socket server like an IBM System x3850 X6 up to 60 processor cores that can be distributed across dozens of virtual machines. Even a blade server like a PowerEdge M620 can support two Xeon E5-2695 12-core processors for a total of 24 cores per blade.
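The core counts above are simple multiplication. A minimal Python sketch, using only the socket and core figures quoted in this article, makes the arithmetic explicit. The function names and the figure of 30 virtual machines are illustrative assumptions, not vendor sizing guidance:

```python
def total_cores(sockets: int, cores_per_socket: int) -> int:
    """Total physical cores in one server."""
    return sockets * cores_per_socket


def cores_per_vm(cores: int, vm_count: int) -> float:
    """Average physical cores available to each VM (no overcommit assumed)."""
    return cores / vm_count


# Figures quoted in the article.
x3850_cores = total_cores(sockets=4, cores_per_socket=15)  # Xeon E7-8880L
m620_cores = total_cores(sockets=2, cores_per_socket=12)   # Xeon E5-2695

print(x3850_cores)                    # 60
print(m620_cores)                     # 24
print(cores_per_vm(x3850_cores, 30))  # 2.0, with a hypothetical 30 VMs
```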

This trend has also carried over to server memory. Memory modules are bursting with new capacity, boosting rack servers to capacities ranging anywhere from 128 GB to more than 1 TB, depending on the memory modules and features (such as memory sparing, mirroring and so on). Blade servers are typically a bit more modest, with total memory capacities of 32 to 64 GB per blade. Still, memory adds up fast with six, eight or more blades in the same chassis enclosure.

Storage is getting bigger and faster, but the line between memory and storage is blurring as flash memory claims a permanent place in server architectures. For example, solid-state drive (SSD) devices are now a high-performance storage tier (sometimes called tier 0), ranging in capacity from 100 GB to 1 TB and using the conventional Serial ATA (SATA) or Serial Attached SCSI (SAS) disk interface. For better storage performance, servers may incorporate PCIe-based solid-state accelerator devices like the Fusion-io ioDrive2 products, with up to 3 TB of flash storage per unit. When peak storage performance is critical, IT professionals consider terabytes of memory channel storage (MCS) devices like Diablo Technologies' DDR3 flash storage modules, which share memory slots with conventional dual in-line memory modules.

This explosive evolution of data center hardware isn't always perfect. For example, adding risers for high-end memory capacity can drive up server costs and lower performance -- similar performance penalties occur when using memory located off local blades, which is accessed across slower backplanes. These potential problems shift traditional system performance bottlenecks away from CPU-scaling limitations to memory and storage issues. Resource provisioning policies must be modified to accommodate the changing bottlenecks as data center densities increase.

"A new issue that we recently faced is managing the [input/output operations per second] at the storage tier," said Tim Noble, IT director and advisory board member at ReachIPS, noting that mixing thick storage provisioning at the hypervisor level and thin storage provisioning at the physical level causes storage buffer problems that demanded a change to storage tiering. "When the pending write buffer is full, all systems attached to the array can crash or require recovery," Noble said.

But in spite of the potential pitfalls, the underlying message is unmistakable: The mix of advanced hardware and virtualization allows far more workloads to run on just a small fraction of the hardware.

"I'm specing out a new data center that will likely run on a single blade chassis," said Chris Steffen, director of information technology at Magpul Industries Corp. "It'll contain close to 200 virtual machines, and the internal networking and SAN will all fit into a single rack. Ten years ago, the same room required nearly 2,000 square feet of data center space."

More bandwidth and connectivity

Advances in networking are important to the data center density discussion. While traditional Ethernet speeds have increased to up to 100 GbE, only a few organizations have adopted faster standards -- and those adoptions have primarily been in the network backbone of very large data centers. "I don't really see a shift to faster traditional Ethernet speeds," Steffen said. "That money can be better spent in other aspects of the enterprise."

Instead, most data centers continue to use well-established 1 GbE deployments on individual servers, focusing on greater server connectivity and network virtualization. For example, servers like an IBM System x3950 X6 provide two expansion slots to accommodate multiple network adapters, such as two 4 x 1 GbE adapters -- a potential total of eight 1 GbE ports per server. Similarly, most conventional rack servers can accommodate PCI Express-based multi-port network interface cards (NICs).

When servers host a large number of virtual machines, it is usually more productive to increase the number of network ports than to shift to a faster Ethernet standard. Multiple ports increase server resiliency by removing the network connection as a single point of failure. Each NIC port is typically connected to a different switch, ensuring that a switch failure doesn't halt all communication with a particular server. Alternatively, multiple ports can be bonded to provide greater bandwidth to the workloads that need it. Multiple NIC ports also provide failover, allowing workloads to use other available ports when network disruptions occur on another port or switch.
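The resiliency argument can be checked mechanically. The following Python sketch assumes a hypothetical mapping of one server's NIC ports to upstream switches (the port names, switch names and helper functions are invented for illustration) and tests whether the loss of any one switch would cut the server off:

```python
# Hypothetical mapping of one server's NIC ports to upstream switches.
port_to_switch = {
    "nic0": "switch-a",
    "nic1": "switch-b",
    "nic2": "switch-a",
    "nic3": "switch-b",
}


def surviving_ports(mapping, failed_switch):
    """Ports that still have an uplink after one switch fails."""
    return sorted(p for p, s in mapping.items() if s != failed_switch)


def has_single_point_of_failure(mapping):
    """True if losing any one switch would leave the server with no ports."""
    switches = set(mapping.values())
    return any(not surviving_ports(mapping, s) for s in switches)


def nominal_bonded_gbps(ports, port_speed_gbps=1.0):
    """Nominal aggregate bandwidth of bonded ports; real throughput varies."""
    return len(ports) * port_speed_gbps


print(surviving_ports(port_to_switch, "switch-a"))  # ['nic1', 'nic3']
print(has_single_point_of_failure(port_to_switch))  # False
print(nominal_bonded_gbps(["nic0", "nic1"]))        # 2.0
```

A server whose ports all land on one switch would report `True` from `has_single_point_of_failure`, which is the situation that spreading ports across switches is meant to avoid.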

Software-defined networking (SDN) and network functions virtualization (NFV) also affect data center networking density and management. SDN decouples switching logic from switching ports, allowing switch behavior to be analyzed, controlled and optimized centrally. In effect, the network can "learn" more effective or efficient network traffic paths and configure switches to behave accordingly. When implemented properly, SDN's traffic optimizations allow for better network utilization without the expense of greater bandwidth segments.

By comparison, NFV uses virtualization to simulate complex network functions or appliances with standard servers and switches. NFV can provide functions such as network address translation, domain name service, firewalls, intrusion detection and prevention, and caching. The goal is to replace expensive, dedicated network appliances with ordinary server-based functions, and to allow rapid deployment and scalability of those functions -- which is ideal for emerging cloud and other self-service environments.

Regardless of network speed or complexity, configuration can be extremely important in ensuring best performance across increasingly dense network interconnections. "A mismatch between server and switch configuration can cause performance issues, or worse," said Noble. The issue is even more acute as workloads move to more capable servers and additional resources are needed during peak demands.

(Not) Feeling the heat

With data center densities increasing, it is reasonable to expect more heating problems from computing hot spots or poor ventilation. Fortunately, heating is rarely a serious problem for modern data centers -- thanks mainly to a convergence of energy efficiency and heat-management efforts that have developed over the past few years.

Definitions

Network functions virtualization, or NFV, draws on typical server virtualization techniques to put networking features and functions on virtual machines, rather than on custom hardware appliances.

Gigabit Ethernet is a common enterprise network backbone, running on optical fiber at a rate of 1 billion bits (one gigabit) per second.

One key element is the improved design of modern servers and computing components. For example, processors are typically the hottest server component, but new processor designs and die fabrication techniques have largely kept processor thermal levels flat, even as core counts multiply. An Intel Xeon E7-4809 six-core processor dissipates 105 watts, yet the Xeon E7-8880L with 15 cores is rated for the same 105 watts. Boosting the clock speed with a faster Xeon E7-4890 15-core model only increases thermal power dissipation to 155 watts for the entire processor package.
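Dividing each rated wattage by its core count shows how far the per-core thermal load has fallen. This short Python sketch uses only the core counts and wattages quoted above; the dictionary layout and function name are illustrative choices:

```python
# (cores, rated thermal dissipation in watts), as quoted in the article.
processors = {
    "Xeon E7-4809": (6, 105),
    "Xeon E7-8880L": (15, 105),
    "Xeon E7-4890": (15, 155),
}


def watts_per_core(cores: int, watts: int) -> float:
    """Rated thermal dissipation divided evenly across cores."""
    return watts / cores


for name, (cores, watts) in processors.items():
    print(f"{name}: {watts_per_core(cores, watts):.1f} W per core")
# Xeon E7-4809: 17.5 W per core
# Xeon E7-8880L: 7.0 W per core
# Xeon E7-4890: 10.3 W per core
```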

At the same time, data center operating temperatures are climbing. Data centers can run at 80 or 90 degrees Fahrenheit (and even higher) with no significant impact to equipment reliability. To cope with higher temperatures, server thermal management designs now add thermal sensors and aggressive variable-speed cooling systems to remove heat from each unit. "Most dense hardware platforms move air like a jet engine through the system to keep them cool," Steffen said.

The ability to remove heat from the server is a perfect complement to virtualization, which dramatically lowers the overall server count and so reduces the amount of heated air a data center produces. Supporting virtualization technologies like VMware's Distributed Resource Scheduler (DRS) and Distributed Power Management (DPM) can move workloads between servers based on demand and power down unneeded servers. The net result is that data centers no longer rely on massive, power-hungry mechanical air conditioning systems to refrigerate a huge space full of running hardware. Instead, fewer highly consolidated systems make effective use of containment and cooling infrastructures, like in-row or even in-rack cooling units.
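The idea of packing workloads onto fewer hosts so the rest can be powered down can be illustrated with a simple first-fit-decreasing heuristic. This is only a sketch of the general consolidation concept, not VMware's actual placement logic; the load figures, the 80 percent capacity ceiling and the four-host cluster are all hypothetical:

```python
def consolidate(vm_loads, host_capacity):
    """Pack VM loads onto as few hosts as possible (first-fit decreasing)."""
    hosts = []  # each host is a list of the VM loads placed on it
    for load in sorted(vm_loads, reverse=True):
        if load > host_capacity:
            raise ValueError(f"load {load} exceeds host capacity")
        for host in hosts:
            if sum(host) + load <= host_capacity:
                host.append(load)
                break
        else:
            # No existing host has room, so bring another host into use.
            hosts.append([load])
    return hosts


# Hypothetical CPU demand per VM, as a percentage of one host.
vm_loads = [30, 10, 20, 25, 5, 15, 10, 5]
placement = consolidate(vm_loads, host_capacity=80)

total_hosts = 4  # hypothetical cluster size
print(placement)                     # [[30, 25, 20, 5], [15, 10, 10, 5]]
print(total_hosts - len(placement))  # 2 hosts could be powered down
```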

Retrofits and poor data center design choices can lead to unforeseen hot spots. "Even if you're using a Tier 4 data center, that doesn't guarantee it will be correctly designed to mitigate cooling and other operational risks," Noble said. As data center densities increase, careful system monitoring and data center infrastructure management technologies help ensure proper thermal management, energy efficiency and effective computing resource use.

A licensing wrench in the works

Data center densities will increase as virtualization embraces advancing hardware features like x86 and ARM processors. However, IT experts note that hardware density and utilization can be limited by software licensing and software maintenance concerns.

"Virtualized servers consume more software licenses because they are easier to start up and stay around longer even when not used," said Noble. "But they still require software maintenance and patching regardless of whether they are used." As data centers grow and workload densities increase, licensing and patch concerns can often be mitigated through systems management tools that assist IT staff with VM lifecycle and patch-management tasks.

About the author

Stephen J. Bigelow is senior technology writer in the Data Center and Virtualization group at TechTarget. He has written more than 15 feature books on computer troubleshooting. Find him on Twitter @Stephen_Bigelow.