
IoT explained: What is the internet of things?

So, what is the internet of things? Simply put, it's machine-to-machine communication, but it's more than just smart devices. Real-time data from IoT devices is changing the world.

What is the internet of things, exactly? It is an ambiguous term, but it is fast becoming a tangible technology that can be applied in data centers to collect information on just about anything that IT wants to control.

The internet of things is essentially a system of machines or objects outfitted with data-collecting technologies so that those objects can communicate with one another. The machine-to-machine (M2M) data that is generated has a wide range of uses, but it is commonly seen as a way to determine the health and status of things -- inanimate or living.

IT administrators can use IoT for anything in their physical environment that they want information about. In fact, they already do.

In one case, IoT is being used to stymie deforestation in the Amazon rainforest. A Brazilian location-services company called Cargo Tracck places M2M sensors from security company Gemalto in trees in protected areas. When a tree is cut or moved, law enforcement receives a message with its GPS location, allowing authorities to track down the illegally removed tree.
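The alerting logic in a deployment like this can be sketched in a few lines. The example below is only an illustration, not Cargo Tracck's or Gemalto's implementation: the `Position` type, the `movement_alert` function and the 50-meter threshold are all hypothetical. It compares a tag's registered position with its latest GPS fix and produces an alert message once the tag has moved farther than the threshold.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


@dataclass(frozen=True)
class Position:
    lat: float  # degrees
    lon: float  # degrees


def haversine_m(a: Position, b: Position) -> float:
    """Great-circle distance between two positions, in meters."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlambda = math.radians(b.lon - a.lon)
    h = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))


def movement_alert(tag_id: str, home: Position, current: Position,
                   threshold_m: float = 50.0):
    """Return an alert dict if the tag moved beyond threshold_m, else None.

    The threshold is an assumed value chosen for illustration only.
    """
    moved = haversine_m(home, current)
    if moved <= threshold_m:
        return None
    return {
        "tag_id": tag_id,
        "lat": current.lat,
        "lon": current.lon,
        "moved_m": round(moved, 1),
    }
```

Calling `movement_alert("tree-17", Position(-3.0, -60.0), Position(-3.0, -59.99))` returns an alert, because the two fixes are roughly a kilometer apart. A fix at the registered position returns `None`.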

One analyst explained IoT using the iPhone as an analogy: Otherwise disconnected third-party applications that are hosted in the cloud can be connected, and users can access all sorts of data from the device, according to Sam Lucero, senior principal analyst of M2M and IoT at IHS Markit Ltd. in Tempe, Ariz.

How the internet of things works

While some consider IoT to be M2M communication over a closed network, that model is really just an intranet of things, Lucero said.

With an intranet of things, apps are deployed for a specific purpose and don't interact outside of that network. True IoT is where different applications are deployed for specific reasons and the data collected from the machines and objects being monitored are made available to third-party applications. The expectation is that true IoT will provide more value than what can be derived from secluded islands of information, Lucero said.

For IoT to work in data centers, platforms from competing vendors need to be able to communicate with one another. This requires standard APIs that all vendors and equipment can plug into, for both the systems interfaces and the various smart devices, said Mike Sapien, a principal analyst at Ovum.
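One way to picture a standard API that competing vendors plug into is a shared interface with a thin adapter per vendor. The sketch below is an assumption-laden illustration, not any real vendor's API: `SensorSource`, `VendorAProbe`, `VendorBProbe` and `hottest` are hypothetical names, and the two vendors' native formats are invented for the example.

```python
from typing import Protocol


class SensorSource(Protocol):
    """Hypothetical common interface every vendor adapter agrees to expose."""

    def read_celsius(self) -> float: ...


class VendorAProbe:
    """Hypothetical vendor whose hardware reports degrees Fahrenheit."""

    def __init__(self, fahrenheit: float) -> None:
        self._fahrenheit = fahrenheit

    def read_celsius(self) -> float:
        return (self._fahrenheit - 32) * 5 / 9


class VendorBProbe:
    """Hypothetical vendor whose hardware reports tenths of a degree Celsius."""

    def __init__(self, deci_celsius: int) -> None:
        self._deci_celsius = deci_celsius

    def read_celsius(self) -> float:
        return self._deci_celsius / 10


def hottest(sources: dict[str, SensorSource]) -> str:
    """Return the name of the source with the highest reading.

    This function only relies on the shared interface, so it works
    with any mix of vendor adapters.
    """
    return max(sources, key=lambda name: sources[name].read_celsius())
```

With this arrangement, `hottest({"rack-a": VendorAProbe(77.0), "rack-b": VendorBProbe(265)})` compares readings from both vendors in the same unit, and a monitoring application never needs to know which vendor built which probe.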

History of IoT

The term internet of things was reportedly coined by Kevin Ashton during a presentation he made to Procter & Gamble in 1999. Pushing the importance of RFID to company executives, Ashton titled his presentation "Internet of Things" because he wanted to incorporate one of the hottest trends of the late '90s -- the internet -- into his talk. Later that same year, Neil Gershenfeld discussed the concept of IoT in his book, When Things Start to Think, though he didn't use the exact term.

However, by the time Ashton uttered the phrase, IoT was already in the making. One of the first IoT devices was a Coke machine in the early 1980s that allowed programmers at Carnegie Mellon University to check the status of their favorite soda before visiting the machine. At Interop in 1990, John Romkey demonstrated a toaster that could be turned on and off over the internet -- simple, but an early example of an internet-connected device.

Technically, IoT evolved from M2M, with M2M providing the connectivity that links disparate IoT devices. IoT can also be considered an extension of supervisory control and data acquisition (SCADA), a category of software that gathers real-time data from remote locations to control equipment and conditions.

Fast-forward a couple of decades. The internet is now readily available -- remember that in 1995, less than 1% of the world's population had internet access. As of December 2017, more than 54% of the population had internet access, with upwards of 8.5 billion smart devices connected to the internet. And depending on whom you listen to, the number of IoT devices could reach 20.8 billion by 2020, with total spend on smart devices and services expected to reach $3.7 trillion this year.

IBM proposed in February 2013 that its IoT protocol, called MQ Telemetry Transport, or MQTT, be used as the open standard. This would help multiple vendors participate in IoT.
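MQTT is a publish/subscribe protocol: devices publish messages to named topics, and applications subscribe using topic filters that may contain wildcards. The sketch below is a toy, in-memory illustration of that idea, not a real MQTT client or broker. It involves no networking, `topic_matches` and `InMemoryBroker` are hypothetical names, and the matching is simplified (for instance, it ignores the special handling of topics that begin with `$`). It does follow the basic wildcard rules, where `+` matches exactly one topic level and `#` matches all remaining levels.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Simplified MQTT-style topic filter matching."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(filter_levels):
        if level == "#":
            # Multi-level wildcard: valid only as the last level, and it
            # matches everything that remains, including nothing at all.
            return i == len(filter_levels) - 1
        if i >= len(topic_levels):
            return False  # filter is longer than the topic
        if level != "+" and level != topic_levels[i]:
            return False  # literal level differs
    return len(filter_levels) == len(topic_levels)


class InMemoryBroker:
    """Toy broker that delivers messages to matching subscribers in-process."""

    def __init__(self) -> None:
        self._subscriptions = []

    def subscribe(self, topic_filter, callback) -> None:
        self._subscriptions.append((topic_filter, callback))

    def publish(self, topic, payload) -> int:
        """Deliver payload to every matching subscriber; return the count."""
        delivered = 0
        for topic_filter, callback in self._subscriptions:
            if topic_matches(topic_filter, topic):
                callback(topic, payload)
                delivered += 1
        return delivered
```

In this sketch, a subscriber registered with the filter `plant/+/temperature` would receive a message published to `plant/line1/temperature` but not one published to `plant/line1/humidity`. Because publishers and subscribers only share topic names, equipment from different vendors can participate without knowing about one another.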

"[System integrators] like [Hewlett Packard Enterprise], IBM and others are starting to open up their systems to be less restrictive, just as telecom operators are allowing different networks -- not just their own -- to be part of the IoT ecosystem," Sapien said. "But this has taken many years to happen."

Meanwhile, a number of platforms serve as the plumbing to connect systems from different vendors so that they can communicate and be managed. One such platform is Xively Cloud Services, which is LogMeIn Inc.'s public IoT platform as a service. It allows IT to design, prototype and put into production any internet-connected device. (Editor's note: Xively was acquired by Google in 2018. Xively Cloud Services is now part of the Google Cloud Platform product family.)

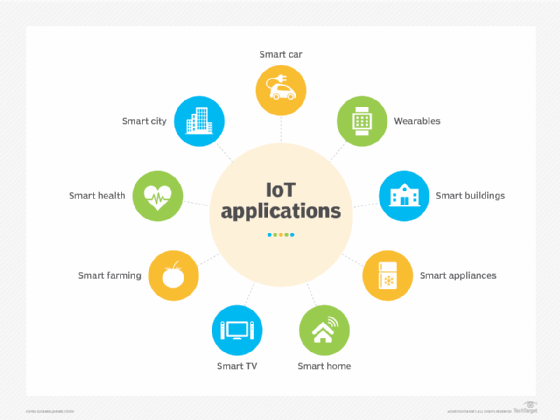

IoT use cases and applications

Looking for more information on the effects of IoT?

Read our extensive coverage on smart cities, including this article that divulges the benefits both citizens and the community can reap with the addition of smart technologies.

Expanding on the smart city use case, IoT is also transforming the transportation industry. Think beyond self-driving cars and learn how IoT is creating more efficient public transportation systems that provide not only better security, but also a better commuter experience.

The internet of things is having a major impact on manufacturing and industry. Explore how collecting data from these environments brings a number of opportunities to manufacturing and industrial organizations alike -- from reducing downtime to increasing efficiency.

Get insight on connected devices in smart homes, including how to address smart home device complexity, pre-empt negative customer experience and keep your eye on the true prize of the smart home: not the smart device, the smart home user.

Beyond smart cities, smart homes and smart manufacturing, the internet of things is revolutionizing the workplace. IoT can unleash a wealth of benefits in the office, not only by monitoring temperatures and adjusting lighting, but also by making for happier employees.

For example, companies that have to monitor energy use might use closed, vendor-specific systems. They can use something like Xively as a secondary system to monitor heating and cooling and control energy use across multiple locations.
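As a rough sketch of what such a secondary monitoring layer might compute, the example below pools power readings from several sites and flags those whose average draw exceeds a budget. It does not use Xively's or any other platform's API. The function names, the `(site, kilowatts)` reading format and the budget figure are all assumptions made for illustration.

```python
from collections import defaultdict
from statistics import mean


def average_kw_by_site(readings):
    """Group (site, kilowatts) readings by site and average each group."""
    by_site = defaultdict(list)
    for site, kilowatts in readings:
        by_site[site].append(kilowatts)
    return {site: mean(values) for site, values in by_site.items()}


def sites_over_budget(readings, budget_kw):
    """Return the sites whose average draw exceeds budget_kw, sorted by name."""
    averages = average_kw_by_site(readings)
    return sorted(site for site, avg in averages.items() if avg > budget_kw)
```

Given hypothetical readings such as `[("boston", 40.0), ("boston", 60.0), ("austin", 30.0)]` and a budget of 45 kW, `sites_over_budget` would flag only the Boston site, whose readings average 50 kW.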

Over the long term, one consequence of the internet of things for the enterprise data center could be a large volume of incoming data that requires significant infrastructure upgrades, particularly for real-time data processing and storage, Lucero said.