How should I choose a new server hardware configuration?

It's important to consider current and future business needs when choosing a server to ensure you'll have adequate CPU, memory, storage and network resources.

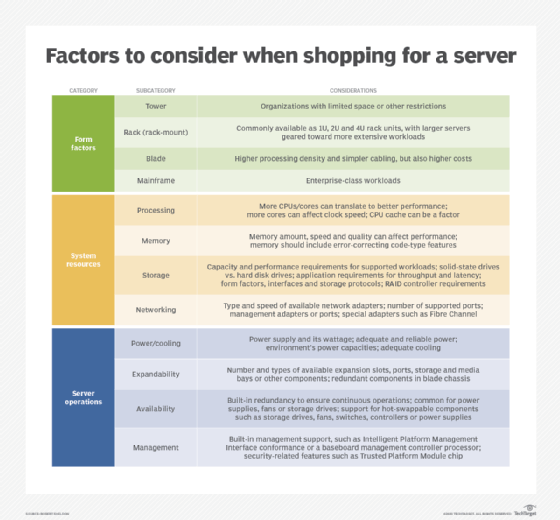

When shopping for a server, decision-makers must choose from a wide range of server hardware configurations. They must consider not only the compute, storage and network resources, but also the server's form factor, power and cooling components, expandability, and other features that support operations and ensure the server's availability and security.

Workload requirements will drive most of the decision-making process, but other factors can also play a role. One way to evaluate a server is to group its characteristics into three categories -- form factors, system resources and server operations -- and assess potential systems based on considerations in each category.

Form factors

Servers generally come in four form factors: tower, rack (rack-mount), blade or mainframe. Most organizations opt for rack or blade servers, but those with limited space might run tower servers. In some cases, a large enterprise might choose a mainframe -- at least, for some workloads. The choice depends in part on the server environment, which can have size, power and cooling limitations. Even noise levels can be a factor in selecting a server.

Rack servers are mounted in server racks along with other components, and their height is measured in rack units (U or RU). One rack unit is 1.75 inches (44.45 mm) of vertical rack space. Rack servers generally range from 1U to 4U. The larger the rack server, the greater its processing capabilities and expandability tend to be, but the higher the cost.
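The rack-unit arithmetic can be sketched in a few lines. One rack unit is 1.75 inches of vertical space; the 42U rack height and the 4U reserved for switches and power distribution are assumptions chosen for illustration, not figures from any particular vendor or from this article.

```python
RACK_UNIT_INCHES = 1.75  # height of 1U in a standard 19-inch rack


def server_height_inches(units: int) -> float:
    """Return the vertical space a server of the given U size occupies."""
    return units * RACK_UNIT_INCHES


def servers_per_rack(server_units: int, rack_units: int = 42,
                     reserved_units: int = 0) -> int:
    """Return how many servers of one size fit in a rack.

    rack_units and reserved_units are illustrative assumptions.
    """
    usable = rack_units - reserved_units
    if server_units <= 0 or usable < 0:
        raise ValueError("server_units must be positive and usable space non-negative")
    return usable // server_units


if __name__ == "__main__":
    for size in (1, 2, 4):
        fit = servers_per_rack(size, reserved_units=4)
        print(f"{size}U: {server_height_inches(size)} in tall, {fit} per rack")
```

Under these assumptions, a rack holds far fewer 4U systems than 1U systems, which is one reason the larger form factor should be justified by the workload.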

Blade servers are mounted in chassis that can be fitted into server racks. They offer greater server density than rack servers because more processing power can be squeezed into a smaller space. The chassis also simplifies cabling and consolidates resources such as power supplies. However, blade servers can cost more and might require data center updates to accommodate power and cooling requirements. Even floor weight can be a factor in supporting higher-density systems.

In addition to the environment, budget and workload requirements should be considered when choosing the form factor, as should the organization's current infrastructure. For example, a small startup that needs only a couple of servers and doesn't already own a server rack might want to stick with tower servers. By the same token, there's no reason to invest in a powerhouse 4U rack server if an organization's applications barely justify a 2U system.

System resources

Above all, a server must be able to support its target workloads, and that means having adequate compute, storage and networking resources. Compute resources include both processing and memory. Processing is carried out by one or more CPUs, with each CPU supporting multiple cores to enable multiprocessing. For example, a server might come with two CPUs that each provide 10 cores.
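One way to make "adequate resources" concrete is to compare a candidate server against the minimums a workload needs. The following is a minimal sketch, not a sizing method from this article: the `ServerSpec` class, the `shortfalls` function and every figure except the two 10-core CPUs are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ServerSpec:
    """Headline resources of a server, or the minimums a workload needs."""
    cpus: int
    cores_per_cpu: int
    memory_gb: int
    storage_tb: float
    network_gbps: float

    @property
    def total_cores(self) -> int:
        # Two CPUs with 10 cores each give 20 cores in total.
        return self.cpus * self.cores_per_cpu


def shortfalls(candidate: ServerSpec, required: ServerSpec) -> List[str]:
    """List the resources where the candidate falls below the requirement."""
    checks = {
        "cores": (candidate.total_cores, required.total_cores),
        "memory_gb": (candidate.memory_gb, required.memory_gb),
        "storage_tb": (candidate.storage_tb, required.storage_tb),
        "network_gbps": (candidate.network_gbps, required.network_gbps),
    }
    return [name for name, (have, need) in checks.items() if have < need]


if __name__ == "__main__":
    # Hypothetical candidate and workload minimums, for illustration only.
    candidate = ServerSpec(cpus=2, cores_per_cpu=10, memory_gb=128,
                           storage_tb=4.0, network_gbps=20.0)
    required = ServerSpec(cpus=1, cores_per_cpu=16, memory_gb=256,
                          storage_tb=2.0, network_gbps=10.0)
    print(candidate.total_cores)            # 20
    print(shortfalls(candidate, required))  # ['memory_gb']
```

A comparison like this only covers headline numbers. Clock speed, cache, memory speed and storage latency still need to be assessed separately.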

More processors and cores typically translate to better performance, but CPU clock speed is also an important consideration. The higher the clock speed, the more instructions each core can execute per second, all else being equal. However, increasing the number of cores on a CPU can mean lower clock speeds, so a balance must be struck based on workloads and budget. Another factor is the CPU's cache, which is implemented differently from one CPU to another and can also play a role in performance.

A server also needs adequate memory to support its workloads, as well as the OS, security software and other system applications. In some cases, all it takes to improve a computer's overall performance is to increase the amount of memory, which minimizes paging and provides the CPU with faster access to instructions. However, memory speed and quality are also important factors. In addition, server memory should include fault-tolerant capabilities such as error-correcting code (ECC).

Most servers come with some type of internal storage, but the amount needed will depend on the specific circumstances. For example, an organization might use a storage area network or other external system for the bulk of its data. Decision-makers must determine how much data will be stored on the server, factoring in system, application and user data, where appropriate.
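A rough capacity estimate can be sketched by adding up the system, application and user data and then projecting forward. The growth rate, planning horizon and headroom below are assumptions introduced for illustration; real values depend on the organization's own data.

```python
def internal_storage_needed_gb(system_gb: float, application_gb: float,
                               user_gb: float, annual_growth: float = 0.0,
                               years: int = 0, headroom: float = 0.2) -> float:
    """Estimate the internal storage to provision, in gigabytes.

    annual_growth (e.g. 0.25 for 25%), years and headroom are
    illustrative assumptions, not recommendations.
    """
    base = system_gb + application_gb + user_gb
    projected = base * (1 + annual_growth) ** years  # compound growth
    return projected * (1 + headroom)                # spare capacity on top


if __name__ == "__main__":
    # Hypothetical figures: 100 GB system, 200 GB application, 700 GB user data.
    today = internal_storage_needed_gb(100, 200, 700)
    later = internal_storage_needed_gb(100, 200, 700, annual_growth=0.25, years=2)
    print(f"now: {today:.0f} GB, in two years: {later:.0f} GB")
```

If most data lives on a storage area network or other external system, the user-data term might be close to zero and the internal drives only need to hold the OS and applications.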

At the same time, decision-makers must consider storage performance. For example, they must decide whether to use solid-state drives (SSDs), hard disk drives (HDDs) or some type of hybrid configuration, taking into account application throughput and latency requirements. To this end, they should evaluate the storage form factors, interfaces and protocols that a server supports. In addition, many organizations will want their servers to include hardware RAID controllers to help protect data and improve performance.

Another consideration when evaluating a server hardware configuration is its networking capabilities -- specifically, the type and speed of the available network adapters and the number of ports they support. For example, a server might include a single 1 Gigabit Ethernet (GbE) adapter that comes with two ports, or it might include two 10 GbE adapters that each come with four ports. A server might also provide a dedicated management port or come with a special adapter, such as one for connecting to a Fibre Channel storage network.
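The difference between the two example configurations is easier to see as arithmetic. This sketch multiplies adapters, ports and port speed to get a theoretical aggregate line rate; the function name is hypothetical, and real throughput will be lower and depends on how the ports are bonded and used.

```python
def aggregate_gbps(adapters: int, ports_per_adapter: int,
                   port_speed_gbps: float) -> float:
    """Return the theoretical combined line rate of all ports, in Gbps."""
    return adapters * ports_per_adapter * port_speed_gbps


if __name__ == "__main__":
    # One 1 GbE adapter with two ports.
    print(aggregate_gbps(1, 2, 1))   # 2
    # Two 10 GbE adapters with four ports each.
    print(aggregate_gbps(2, 4, 10))  # 80
```

The second configuration offers 40 times the raw port bandwidth of the first, which matters only if the workload and the upstream switches can make use of it.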

Server operations

The server operations category incorporates those features that tend to apply to server-wide operations or characteristics. For example, a server's power supply and cooling fans should be able to adequately and reliably support operations. Decision-makers should also assess how a server's power consumption might affect available power sources. For instance, a data center might have limits on how much power is available for each rack.
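A per-rack power limit translates directly into a cap on how many servers the rack can hold. The sketch below assumes a hypothetical 5,000 W rack budget, a 450 W peak draw per server and an 80% derating factor to leave a safety margin; all three figures are illustrative assumptions, not values from this article.

```python
def servers_within_power_budget(rack_budget_watts: float,
                                server_peak_watts: float,
                                derate: float = 0.8) -> int:
    """Return how many servers fit under a rack's power limit.

    derate is the fraction of the budget treated as usable; 0.8 is an
    assumed safety margin, not a universal rule.
    """
    if server_peak_watts <= 0:
        raise ValueError("server_peak_watts must be positive")
    usable_watts = rack_budget_watts * derate
    return int(usable_watts // server_peak_watts)


if __name__ == "__main__":
    # Hypothetical rack and server figures.
    print(servers_within_power_budget(5000, 450))  # 8
```

When the power-limited count is lower than the number of servers that physically fit in the rack, power rather than space is the binding constraint.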

Another important consideration is a server's expandability, in terms of the number and types of available expansion slots, ports, storage and media bays, or other components that control what can be added to the server before it reaches capacity. For example, a server might offer only a single PCIe 2.0 slot, or it might provide four PCIe slots that are a mix of PCIe 2.0 and 3.0. Decision-makers should also evaluate a server's ability to support additional processing, memory and networking resources, should they be required for future workloads.

A server's built-in redundancy should also be evaluated to ensure the system can continue to operate even if a component fails. This can be particularly important with power supplies, fans and storage drives. For blade servers, some of that redundancy is in the chassis, such as dual power supplies or fans. Redundancy requirements will depend on the supported workloads, with mission-critical workloads being the highest priority.

To minimize downtime, some organizations might also want servers that provide hot-swappable components, which can be added or replaced without shutting down the server. Servers often support hot-swappable storage drives, but some might also provide hot-swappable fans, switches, controllers or even power supplies. Like redundancy, the need for hot-swappable components will depend on the supported workloads.

A server should also be evaluated for its built-in management capabilities. For example, many servers support the Intelligent Platform Management Interface (IPMI) specification, which facilitates system monitoring and management. Such servers typically include a baseboard management controller (BMC), a specialized service processor for monitoring a system's physical state. Just as important are the integrated security features, such as a Trusted Platform Module (TPM) chip for storing cryptographic keys, such as RSA keys, that support hardware authentication.

Choosing server hardware

There's no magic formula for choosing a server hardware configuration, and the final selection will depend on supported workloads and other variables that affect server operations. The challenge for decision-makers is to find a system that will meet current and future needs without wasting money on hardware they don't need or ending up with a system that quickly becomes obsolete.

To this end, they must weigh considerations across form factors, system resources and server operations, always keeping their workloads at the forefront of their minds.